What is the Zipfian distribution telling us about search ingestion?

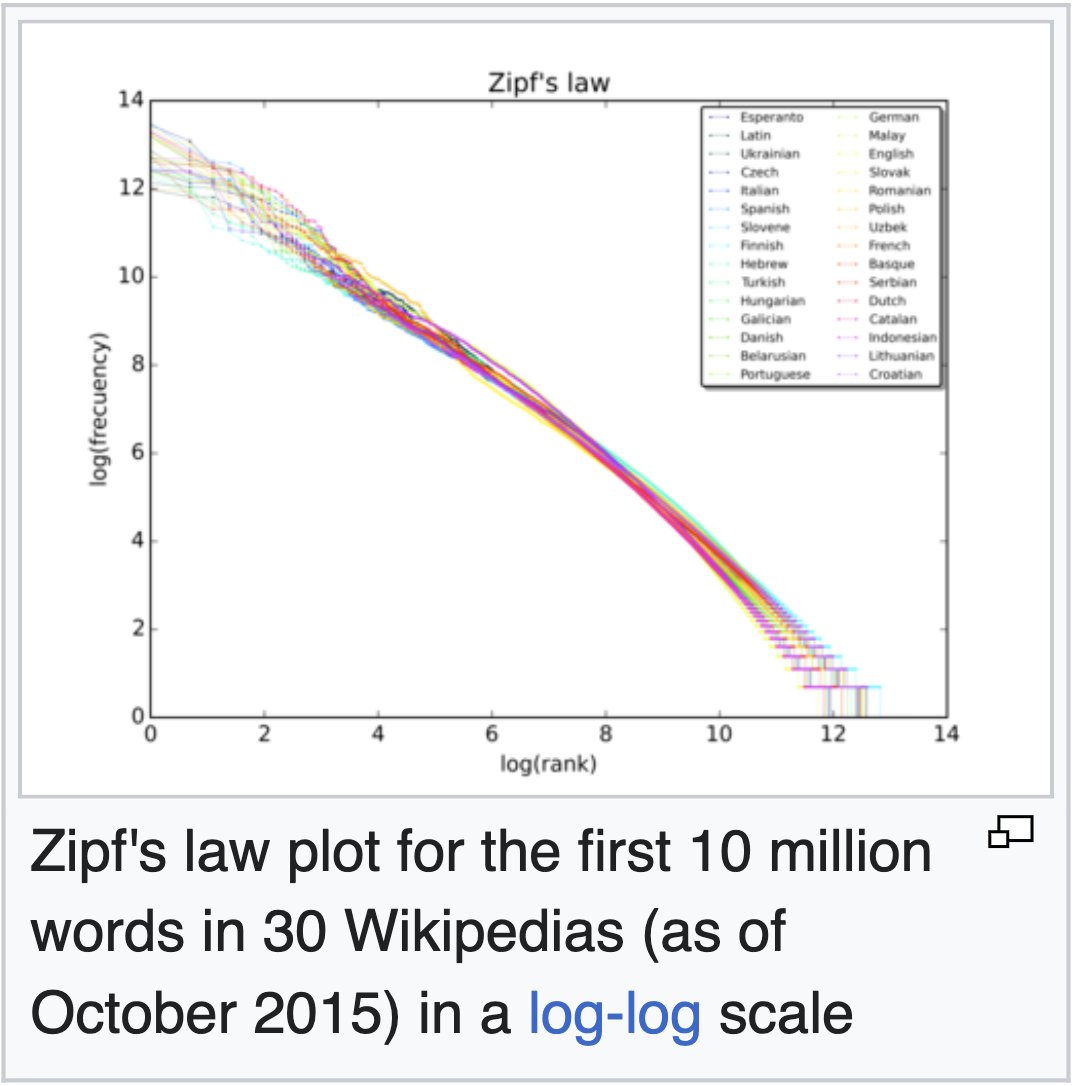

I think it was my friend Jeremy that first pointed out (to me) the Zipfian distribution and what it says about the distribution of words in a corpus. It’s a neat insight into linguistic patterns, and a gateway to learning about interesting power laws arising in nature.

In search, you can note how “the” and “of” are the most popular words in English. And with a few more steps you can drop stop words from your index and really improve things like processing and index size (with some tradeoffs).

But what about the distribution of how often any given document is needed within a company?

Is it possible that a relatively small set of documents covers most of the interesting questions and information seeking needed in day to day work?

Why do enterprise and other search domains spend so much up front compute to ingest, process, and index all documents. Compute waste go brrrrrr.

Is it possible there’s a better approach?